How to Choose AI Hardware for Performance, Efficiency, and Scale

Author: sana

Released: January 8, 2026

The "Compute Wall" is real, and most companies are hitting it head-first. You’ve built a great model, your data is clean, and your API is ready. Then you see the bill. Or worse, your latency makes the user experience feel like 1998 dial-up. The bottleneck isn't your code; it's the silicon.

Training modern AI systems has become extremely expensive. Estimates suggest that training frontier AI models can cost tens of millions of dollars in compute alone, with hardware accounting for the largest portion of that expense.

AI hardware is no longer just about "bigger is better"; you need the right tool for the task. Many companies waste money because their GPU utilization can drop to 20–40%, which means they pay for far more compute capacity than they actually use.
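To see what low utilization does to your real price, here is a minimal sketch. The $3.00 hourly rate is a hypothetical placeholder, and the function name is made up for illustration; plug in your own numbers.

```python
def cost_per_useful_gpu_hour(hourly_rate: float, utilization: float) -> float:
    """Price paid per hour of GPU work that is actually performed."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_rate / utilization


# Hypothetical list price of $3.00 per GPU-hour, for illustration only.
RATE = 3.00

for util in (0.20, 0.40, 0.80):
    effective = cost_per_useful_gpu_hour(RATE, util)
    print(f"{util:.0%} utilized -> ${effective:.2f} per useful GPU-hour")
```

At 20% utilization, every useful hour costs five times the list price, whatever that list price happens to be.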

Here’s how to make smarter hardware choices so you get the performance you need without overspending.

The AI Compute Crisis: Why Your Current Setup is Failing

The math is simple but brutal. Large Language Models (LLMs) keep growing exponentially in size and compute demand, while traditional CPU performance only crawls forward. Even general-purpose GPUs are starting to show their age.

We’re no longer just “running compute”; we need hardware designed specifically for heavy AI workloads, and old approaches can be painfully inefficient.

- Memory bandwidth is often the hidden bottleneck.

When running large models such as Llama-class architectures, a significant portion of time can be spent waiting for data to move between memory and the processor. This bottleneck, often called the "memory wall," raises inference latency, and it is a major reason your inference costs are skyrocketing.

- Power consumption is the second silent killer.

A single high-end AI rack can pull as much power as a small neighborhood. If your hardware isn’t optimized for efficiency, your operating costs can quickly eat into profits before you scale.
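A rough way to put a number on that operating cost is sketched below. The rack load, PUE (power usage effectiveness, meaning total facility power divided by IT power), and electricity price are all hypothetical inputs, not measurements.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(it_load_kw: float, pue: float, price_per_kwh: float) -> float:
    """Yearly electricity cost for a rack that runs around the clock.

    it_load_kw:    power drawn by the servers themselves
    pue:           total facility power / IT power (always >= 1.0)
    price_per_kwh: electricity price in dollars
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * pue * HOURS_PER_YEAR * price_per_kwh


# Hypothetical rack: 40 kW of IT load, PUE of 1.4, $0.10 per kWh.
print(f"${annual_energy_cost(40.0, 1.4, 0.10):,.0f} per year")
```

Cooling overhead enters through the PUE term, which is why an efficient facility matters almost as much as an efficient chip.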

Choosing the Right Silicon: It’s Not Just NVIDIA Anymore

Everyone wants the NVIDIA H100, and for good reason. It is widely considered the gold standard for large-scale AI training. But it is also extremely expensive: a single H100 GPU can cost $25,000–$40,000, and an 8-GPU server can exceed $300,000.

For many production tasks, an H100 is overkill; cheaper, specialized chips often perform just as well for inference or smaller workloads.

You need to distinguish between training and inference. Training requires massive clusters; inference for users demands speed and efficiency. Purpose-built accelerators like Google TPU v5p or AWS Inferentia2 can often handle these tasks at a lower cost and with less wasted energy.

Don't ignore the "underdogs." Companies like Groq are using LPU (Language Processing Unit) architectures to achieve staggering tokens-per-second throughput. If your application requires real-time voice or lightning-fast chat, looking beyond the "Green Giant" (NVIDIA) is a strategic necessity.

The Rise of Specialized Architectures

Modern AI chips aren't just faster; they are smarter about how they handle numbers. We’ve moved past standard 32-bit floating-point math. Today’s winners use mixed-precision (FP8 or even INT4) to squeeze more performance out of the same silicon.
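The payoff from lower precision is easy to estimate for weights alone. This sketch assumes a hypothetical 70-billion-parameter model and ignores activations, the KV cache, and the small overhead of quantization scales.

```python
# Bits used to store one weight in each number format.
BITS_PER_PARAM = {"FP32": 32, "FP16": 16, "FP8": 8, "INT4": 4}


def weight_memory_gb(num_params: float, fmt: str) -> float:
    """Memory needed for the model weights only, in GB (10^9 bytes)."""
    return num_params * BITS_PER_PARAM[fmt] / 8 / 1e9


# Hypothetical 70-billion-parameter model.
NUM_PARAMS = 70e9

for fmt in BITS_PER_PARAM:
    print(f"{fmt}: {weight_memory_gb(NUM_PARAMS, fmt):.0f} GB")
```

Each halving of precision halves the bytes that have to be stored and moved, which is where most of the speedup comes from.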

Look for chips that support "Sparsity." This is a technique where the hardware skips the "zeros" in a neural network. Since pruned AI models are often 50-80% zeros, hardware that can skip them can deliver up to roughly double the throughput on supported operations without a matching increase in power draw.
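One pattern that hardware can exploit is "2:4" structured sparsity, where two of every four consecutive weights are zero; NVIDIA's recent GPU generations accelerate this layout. The sketch below is an assumption about what such pruning looks like in its simplest form (keep the two largest magnitudes per group), not any vendor's actual tooling. Real workflows usually fine-tune the model afterward to recover accuracy.

```python
def prune_2_of_4(weights: list[float]) -> list[float]:
    """Zero the two smallest-magnitude values in every group of four."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned: list[float] = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest-magnitude entries, which are kept.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(v if i in keep else 0.0 for i, v in enumerate(group))
    return pruned


row = [0.9, -0.1, 0.05, -0.7, 0.2, 0.0, -0.3, 0.01]
print(prune_2_of_4(row))
# -> [0.9, 0.0, 0.0, -0.7, 0.2, 0.0, -0.3, 0.0]
```

Because the zeros land in a fixed, predictable pattern, the hardware can skip them without the bookkeeping that random sparsity would require.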

Memory is the New Currency

For large AI models, the real bottleneck is often memory bandwidth, not compute. This is why modern accelerators rely on High Bandwidth Memory (HBM) placed directly next to the processor. Newer HBM3e stacks can deliver more than 1.2 TB/s of bandwidth, allowing GPUs to feed data to thousands of cores without constant stalls.

When comparing chips, always check the HBM capacity and bandwidth. If your model cannot fit in GPU memory, the system will constantly swap data between devices or system RAM, a performance penalty that can destroy inference speed.
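Both checks can be done on the back of an envelope before you buy anything. The sketch below assumes a hypothetical accelerator with 80 GB of HBM at 3,000 GB/s, an assumed 80% headroom rule of thumb, and the simplification that every weight is read from memory once per generated token.

```python
def fits_in_memory(weights_gb: float, hbm_gb: float, headroom: float = 0.8) -> bool:
    """True if the weights fit in a fraction of HBM, leaving the rest for
    activations and the KV cache. The 0.8 default is an assumed rule of thumb."""
    return weights_gb <= hbm_gb * headroom


def max_decode_tokens_per_s(weights_gb: float, bandwidth_gb_s: float) -> float:
    """Rough ceiling for single-stream decoding, assuming every weight is
    read from memory once per generated token."""
    return bandwidth_gb_s / weights_gb


# Hypothetical accelerator: 80 GB of HBM at 3,000 GB/s.
HBM_GB, BANDWIDTH_GB_S = 80.0, 3000.0

for label, weights_gb in (("140 GB of weights", 140.0), ("35 GB of weights", 35.0)):
    if fits_in_memory(weights_gb, HBM_GB):
        ceiling = max_decode_tokens_per_s(weights_gb, BANDWIDTH_GB_S)
        print(f"{label}: fits, at most ~{ceiling:.0f} tokens/s per stream")
    else:
        print(f"{label}: does not fit on one device")
```

Batching spreads each weight read across many requests, so aggregate throughput can sit well above this per-stream ceiling. The ceiling itself only moves with bandwidth or model size.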

Data Center Evolution: It’s All About the Interconnect

A single chip is a brick if it can't talk to its neighbors. The "cluster" is the new unit of compute. This is why networking technologies like InfiniBand and Ultra Ethernet are becoming as important as the chips themselves.

If you are building an in-house cluster, you must solve the "thermal challenge" first. Standard air cooling won't cut it for a rack of H200s. You are looking at liquid cooling or direct-to-chip cooling. If your facility isn't ready for this, stick to the cloud.

The Hybrid Cloud Strategy

I always recommend a hybrid approach. Use Microsoft Azure AI or Google Cloud for your massive training runs where you need 10,000+ GPUs. But for steady-state inference, consider "bare metal" or specialized AI cloud providers like Lambda Labs or CoreWeave.

Specialized providers often offer better "performance per dollar" because they don't carry the overhead of a general-purpose cloud. They are built by AI engineers for AI engineers. If you are spending more than $50,000 a month on compute, the price difference becomes significant.

Software-Hardware Co-Design: The Secret Sauce

Hardware is useless without software that knows how to use it. This is one reason PyTorch has become the industry standard: it abstracts away the hardware complexity. You still need to optimize, though.

If you are running on NVIDIA, you should be using TensorRT. If you are on a TPU, you need XLA (Accelerated Linear Algebra). If you just "plug and play" a model from Hugging Face without hardware-specific optimization, you are leaving half your performance on the table.

The Quantization Trick

You can often shrink a model by 4x, for example by storing 32-bit weights as 8-bit integers, with very little loss in accuracy. This process, called quantization, allows a massive model to fit on cheaper, smaller chips. It’s the difference between needing an $80,000 server and a $5,000 workstation.
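Here is a minimal sketch of the idea, assuming the simplest scheme: symmetric quantization with one scale for the whole tensor, going from 32-bit floats to 8-bit integers (a 4x reduction in bytes per weight). Production toolchains typically use per-channel scales and calibration data, so treat this as an illustration rather than a recipe.

```python
def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization to signed 8-bit integers."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range used here.
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Map the integers back to approximate floating-point values."""
    return [q * scale for q in quantized]


weights = [0.42, -1.27, 0.003, 0.88, -0.51]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
worst = max(abs(a - b) for a, b in zip(weights, restored))

print(q)  # -> [42, -127, 0, 88, -51]
print(f"worst-case error: {worst:.4f}")
```

The rounding error per weight is bounded by half the scale, which is why accuracy usually holds up as long as no single outlier stretches the range.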

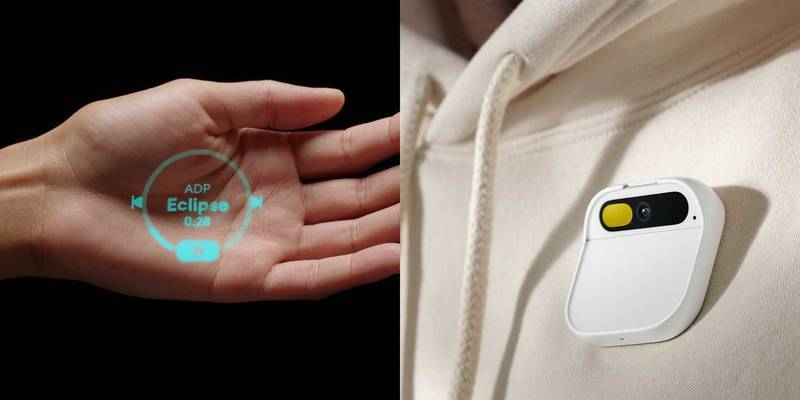

Edge AI: Bringing Intelligence to the Device

Not all AI belongs in the cloud. For autonomous vehicles, drones, or privacy-sensitive medical tech, you need "Edge AI": models that run on low-power chips (NPUs) inside your phone or car.

The challenge here is the "Power Envelope." You might only have 5 or 10 watts to work with. Companies like Qualcomm and Apple are leading the way here, integrating powerful AI accelerators directly into their SoCs (Systems on a Chip).

If you are developing a mobile app, don't default to an API call. Check if the user's device can run the model locally. It’s faster for them and free for you. Apple's Core ML makes this surprisingly easy to implement.

The Future: Neuromorphic and Optical Chips

Traditional silicon scaling is slowing, pushing researchers to explore new computing architectures. Neuromorphic chips mimic the human brain by using networks of artificial neurons and synapses that only activate when signals arrive. This event-driven design uses very little energy when idle and draws power mainly during computation.

Processors such as Intel Loihi 2 run spiking neural networks more efficiently than conventional GPUs, which makes them suitable for robotics, edge devices, and real-time sensing systems.

Optical or photonic computing approaches computation differently, using light instead of electricity. Companies like Lightmatter and Lightelligence are developing accelerators that handle matrix multiplications, a core operation in AI, through optical interference.

Because photons produce much less heat than electrons, these systems can improve performance per watt and reduce cooling needs in large data centers. Early commercial testing is already underway, suggesting these technologies may play a role in future AI infrastructure.

Leading tech companies are already applying vertical integration to maximize efficiency. Tesla built its own Dojo chips to handle the video-processing demands of training Full Self-Driving rather than relying on standard hardware. Google did the same with its TPU, allowing it to offer services like Gemini at a cost competitors struggle to match.

The lesson is clear: designing or choosing hardware with your specific workload in mind can deliver significant advantages and reduce dependence on expensive off-the-shelf GPUs.

Avoiding the "Hype" Traps

Do not rely too heavily on headline metrics like total TFLOPS when evaluating AI hardware. TFLOPS measures theoretical floating-point performance, but it often says little about how a system performs in real AI workloads.

What matters more in production is practical throughput and cost efficiency. For language models, a more meaningful metric is tokens per second per dollar, which reflects both inference speed and infrastructure cost.

In many cases, a well-optimized $10,000 setup can outperform a $40,000 system if the model is properly quantized and the software stack is tuned to the hardware.
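To make that comparison concrete, here is a small sketch of both metrics. The throughput figures are hypothetical stand-ins for your own benchmark results, and the function names are made up for illustration.

```python
def tokens_per_second_per_dollar(tokens_per_s: float, system_cost: float) -> float:
    """Sustained throughput divided by what the system cost to buy."""
    return tokens_per_s / system_cost


def cost_per_million_tokens(tokens_per_s: float, hourly_cost: float) -> float:
    """Dollars per million generated tokens at a sustained throughput."""
    tokens_per_hour = tokens_per_s * 3600
    return hourly_cost / tokens_per_hour * 1_000_000


# Hypothetical measurements: (tokens per second, purchase price in dollars).
systems = {
    "tuned $10,000 setup": (1800.0, 10_000.0),
    "untuned $40,000 system": (2400.0, 40_000.0),
}

for name, (tps, price) in systems.items():
    score = tokens_per_second_per_dollar(tps, price)
    print(f"{name}: {score:.3f} tokens/s per dollar")
```

With these made-up numbers the cheaper box is slower in absolute terms but delivers three times as much throughput per dollar. For rented hardware, `cost_per_million_tokens` gives the same comparison against an hourly rate.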

Vendor lock-in is another risk that teams often underestimate. Many accelerator platforms rely on proprietary compilers, runtime libraries, or programming frameworks tied to a single chip vendor. If your training and inference pipeline depends entirely on those tools, switching hardware later can become expensive and time-consuming.

To keep flexibility, many teams build their pipelines around open frameworks such as OpenXLA or the Triton Inference Server, which support multiple hardware backends and make future migrations far easier.

Rethinking How You Use Hardware

The hardware world moves faster than the software that runs on it. What worked six months ago may already be outdated. Success isn’t about having the most GPUs; it’s about using the right hardware for the job.

Stop treating compute as just another utility. Look closely at your current inference costs, experiment with techniques like quantization, and be open to alternatives beyond the usual options.

The goal isn’t just to run AI. It’s to run it efficiently, cost-effectively, and faster than your competitors. Choosing and using the right silicon wisely is the foundation for achieving that.